April 28, 2024

I was asked today what I thought about artists protecting their artwork online. The artist had received an email recommending the use of “poisoning”, watermarks and copyright as methods of protecting artwork online.

“Can I prevent people from copying my work if I put it on my website?” is a question artists have asked ever since they began putting their work on websites, and watermarks and copyright have always been part of the answer.

“Poisoning” is the newest of the suggestions. I don’t recommend using it, for ethical reasons, but I’ll address the practical implications of all three first.

COPYRIGHT

Regarding copyright, this one is easy, as the game has not changed. In the US, your work is protected by copyright automatically from the moment you create it, but registering it with the US Copyright Office is what gives that protection teeth. For a US work, you generally need to register before you can file an infringement lawsuit at all. If you only register after someone has started copying your work in a way that isn’t covered by “fair use”, you can still sue, but the damages you recover may be limited to the losses you can prove or the profit the infringer made from the copy. If your work was registered before the infringement began (or within three months of its first publication), you can instead sue for statutory damages of up to $150,000 per work for willful infringement (regardless of whether the infringer has made a profit from the copy), and the court may also award you your attorney fees if you win. Copyright protection lasts for 70 years after your death for work created on or after January 1, 1978. Work published before 1978 gets up to 95 years of protection from the date of publication (which is why the Mickey Mouse cartoon “Steamboat Willie” from 1928 entered the public domain in 2024).

So there’s that. You can start the copyright registration process here.
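The term arithmetic in the copyright paragraph can be checked with a few lines of code. This is a simplified sketch under stated assumptions, not legal advice: both helper names are hypothetical, post-1978 work is assumed to have a single individual author, and a pre-1978 work is assumed to have been published with its copyright properly secured and renewed so that it ran the full 95 years. Both terms run through December 31 of the final year, so the work enters the public domain on January 1 of the following year.

```python
# Simplified US copyright-term arithmetic (a sketch, not legal advice).
# Assumptions: single individual author for post-1978 work; pre-1978 work
# was published with copyright secured and renewed (full 95-year term).
# Terms run through December 31 of the last protected year.


def pd_year_published_before_1978(publication_year: int) -> int:
    """First public-domain year for a work published before 1978."""
    if publication_year >= 1978:
        raise ValueError("use pd_year_created_from_1978 for later works")
    last_protected_year = publication_year + 95
    return last_protected_year + 1  # enters the public domain on January 1


def pd_year_created_from_1978(author_death_year: int) -> int:
    """First public-domain year for a work created on/after Jan 1, 1978.

    Life of the author plus 70 years (not a work made for hire).
    """
    last_protected_year = author_death_year + 70
    return last_protected_year + 1  # enters the public domain on January 1


print(pd_year_published_before_1978(1928))  # Steamboat Willie (1928) -> 2024
```

Works made for hire, anonymous works, and works created but left unpublished before 1978 follow different rules that this sketch doesn't model.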

WATERMARKING

Regarding watermarking artwork for website display, I have never recommended it. Watermarks make work look dreadful (who can really appreciate an image with a nasty watermark over it?), and they aren’t guaranteed to protect you, since anyone with good Photoshop skills can edit out most watermarks. In the past, my advice was to make sure the images used on websites were small and low-resolution enough to be useless to anyone who wanted to use them in a way that would infringe your rights.

These days people are using larger images, sometimes just because they don’t know how to resize images for the web, but also because our screens are now so large and high-resolution that we need larger images (and bandwidth is fast enough that we can get away with them). I still don’t recommend spoiling your images with watermarks because, along with all the other technologies that have improved, Photoshop and AI models can now erase watermarks as easily as unwanted bits of background, extend backgrounds, and do all kinds of other fancy things to images for you. Your main protection from people copying an image is still to avoid making large, high-resolution versions of your artwork accessible on your website.
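To make “small enough” concrete, here is a minimal sketch of the usual fit-within-a-box resize calculation: scale the image so its longer edge is no more than a chosen number of pixels, keep the aspect ratio, and never enlarge. The function name and the 1200-pixel default are illustrative assumptions, not a standard; pick a size that looks good on screen.

```python
from typing import Tuple


def web_display_size(width: int, height: int, max_edge: int = 1200) -> Tuple[int, int]:
    """Scale (width, height) so the longer edge is at most max_edge pixels.

    Keeps the aspect ratio and never enlarges an image that is already small.
    The 1200-pixel default is an illustrative assumption, not a standard.
    """
    if width <= 0 or height <= 0:
        raise ValueError("dimensions must be positive")
    longest = max(width, height)
    if longest <= max_edge:
        return width, height  # already small enough; don't upscale
    scale = max_edge / longest
    # Round to whole pixels, but never let a very thin image collapse to 0.
    return max(1, round(width * scale)), max(1, round(height * scale))


# A 6000 x 4000 photo of a painting becomes a 1200 x 800 web image.
print(web_display_size(6000, 4000))
```

If you resize with the Pillow library in Python, `Image.thumbnail((1200, 1200))` applies the same fit-within-a-box rule to the image in place. For a sense of scale, at the 300 pixels per inch often used for quality prints, a 1200-pixel edge covers only 4 inches.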

Some people also ask if there is a way to prevent images from being downloaded. There are easy ways to stop people from dragging an image off a web page and saving it (or even right-clicking it and saving it), but it is almost impossible to stop someone who knows their way around code from getting a copy of the original image. I say “almost” because some websites use an inline embedding method that completely hides the image source (generally big corporations, so not a likely option for most artists). Anyway, people can take a screenshot of anything on a web page, so whatever is online can be copied.

AI-POISONING

So now to this new “AI-poisoning” technology.

The email my client received linked to an article about using poisoning or masking technologies entitled: “This new data poisoning tool lets artists fight back against generative AI” (search for that if you want to find the article—for reasons that’ll be more obvious below, I’d rather not link to it directly).

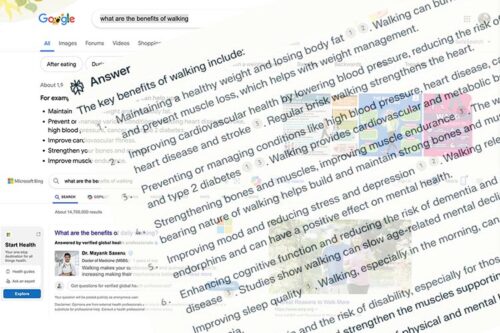

And the original email offered this image as a demonstration of the results of “poisoning”:

AN EXPERIMENT ON MIDJOURNEY

The results above for the poisoned model aren’t that ridiculous, given the reference images used in the prompts. But to see for myself whether the tools offer the artist any protection, I took a screenshot of the image presented as having been “poisoned” (presuming this screenshot would be free of the “poison” embedded in the original) and ran it through Midjourney to see if asking for an image like the screenshot produced weird results. Rather than using the image to create other subject matter in the style of the original, I wrote prompts to try to reproduce the image.

This was the original screenshot (245 x 245px—very tiny):

First I asked Midjourney to “/describe” the image. Midjourney gave me four results, as always, and I chose one of them to recreate the image with an /imagine prompt. The prompt began with the URL of the image for reference, followed by the image description (a description I know Midjourney understands, since it generated it), and ended with an --iw 2 (image weight) parameter to make sure Midjourney kept as close to the original as possible.
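The prompt structure described above (reference-image URL first, then the text description, then the `--iw` parameter) is the same in every experiment here. As a minimal sketch, the hypothetical helper `build_imagine_prompt` just assembles that text; it is not part of any Midjourney API and doesn’t talk to Midjourney, so you would still type or paste the result into Discord yourself. The default weight of 2 simply mirrors the prompts in this post.

```python
def build_imagine_prompt(image_url: str, description: str, image_weight: float = 2) -> str:
    """Assemble /imagine prompt text: image URL, then description, then parameters.

    Hypothetical helper for illustration only; it builds a string and does not
    call Midjourney. Parameters are written with a double hyphen (some
    keyboards auto-convert "--" into a dash).
    """
    parts = [
        image_url.strip(),               # reference image goes first
        " ".join(description.split()),   # tidy up stray whitespace in the text
        f"--iw {image_weight:g}",        # image weight: 2 prints as "2", 0.5 as "0.5"
    ]
    return "/imagine " + " ".join(part for part in parts if part)


print(build_imagine_prompt("https://example.com/reference.png",
                           "A painting of a young woman by Michael Whelan"))
```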

This was my prompt:

/imagine https://media.discordapp.net/ephemeral-attachments/1092492867185950852/1234143939871641753/poisoned-original.png?ex=662fa99c&is=662e581c&hm=1cda2a5b10a66b8b9d616ebe0fc6e578769963e1b804276fa0411aa8c2b23c7f&=&format=webp&quality=lossless&width=492&height=492 A glowing sun rises over the green hills, surrounded by blue mountains and orange sand dunes. The scene is rendered in the style of pointillism with dots of varying sizes to create depth and texture. In front stands an emerald pyramid that glows brightly against the background. A large white diamond shines at its center, casting light on everything around it. This painting symbolizes joy, harmony between man’s spirit and nature, self-realization and unity with God. --iw 2

And this was the result:

The image came out a bit dotty, so I tested a screenshot of the unpoisoned image using the same method. I ran “/describe” on this:

I got four descriptions, one of which I used in the following prompt:

/imagine blob:https://discord.com/8ce48348-6ac3-4e79-9bbe-dce9551c431 A colorful house nestled in the heart of an enchanted forest, surrounded by vibrant trees and blooming flowers. The illustration focuses on a face in the style of Disney Pixar cartoon. It has a warm color tone, bright light, vintage look, and old school trend. The highly detailed, colorful painting has a perfect composition and is illustrated in the style of a handpainted cover art. The image is in high resolution. --iw 2

And I got these variations:

As Midjourney-ish an interpretation as that is, it didn’t have the dottiness of the first experiment, and at first I wondered whether that was because the poison in the original of the first image had somehow come through the screenshot (even if not to the extent of the demo images above). But the first prompt referenced pointillism explicitly (and the original is fairly dotty), while the second prompt included “The image is in high resolution” in its description.

So I tried a couple of alternate prompts for the screenshot of the “poisoned” image (using variations of the second Midjourney-created description) as follows:

/imagine https://s.mj.run/LMeOeLg2Pys A desert landscape with mountains in the background, green and blue hills in the foreground, a white glowing gemstone shining above them, orange sky, a small light at the top left corner, in the style of soft-focus tranquil pointillism painting, detailed pointillist landscapes, golden sun rays, bright colors, surrealistic fantasy. --iw 2

produced these:

/imagine https://s.mj.run/LMeOeLg2Pys A desert landscape with mountains in the background, green and blue hills in the foreground, a white glowing gemstone shining above them, orange sky, a small light at the top left corner, in the style of soft-focus tranquil landscape painting, golden sun rays, bright colors, surrealistic fantasy. --iw 2

produced these (still dotty but not so much):

I’m inclined to think the dottiness simply reflects the dottiness of the original screenshot, not any “poisoning” surviving in the screenshot (whatever might be in the original). The originals are tiny and poor quality, and I could no doubt get better results on both images if I sat here refining the prompt over and over, but I think this is enough for you to see that even if you “poisoned” your images, people could still take a screenshot of what they see on your website and use it to generate whatever they want with AI.

Just to be absolutely sure there’s no difference, I tried the same prompt with each screenshot as the reference:

/imagine [the screenshot, in each case] A painting of a young woman by Michael Whelan --iw 2

From the screenshot of the “unpoisoned” image I got this:

and from the screenshot of the “poisoned” image I got this:

Go figure.

WHAT “POISONING” IS REALLY TRYING TO DO

Which leaves us with the ethical question raised by these “poisoning” techniques. The article that describes the tools opens with this line:

“A new tool lets artists add invisible changes to the pixels in their art before they upload it online so that if it’s scraped into an AI training set, it can cause the resulting model to break in chaotic and unpredictable ways.”

If you read further, you discover that using such a tool (if it works) would not protect your art specifically; it would sabotage the AI model itself. In other words, rather than directly protecting your own work, you would be personally contributing to undoing all the work people have put into developing the model.

This is somewhat akin to a person who takes offense at your artwork and decides to spray-paint all over it in protest, making sure that neither they nor you nor anyone else can ever enjoy that art again (without an enormous amount of clean-up work on your part). Trying to poison the AI models is trying to destroy years and years of work by many people.

In my opinion, attempting to sabotage the AI models is an unethical practice. It’s perfectly fine to engage in the debate and get involved in establishing better protections for artists. There is a need for this and it’s in the process of being debated and sorted out. But simply taking destructive action on someone else’s work is not fine.

PROGRESS THAT’S UNDERWAY

For the record, Microsoft has announced plans to implement new AI licensing terms that would prohibit the commercial use of its models to reproduce copyrighted artwork without permission. OpenAI, the creator of ChatGPT, has also stated that it will not allow its models to be used to generate images from copyrighted artworks. The Copyright Office, along with artist organizations, is working on guidelines for the ethical use of copyrighted material by AI systems. Further, the Content Authenticity Initiative, led by Adobe, is creating technical standards to help verify the provenance of digital content, including AI-generated artwork.

Artists are not fully covered yet, but it looks as though protection for copyrighted work is coming, and you can get behind that effort. The Copyright Office has invited your input here: Artificial Intelligence Study Submission of Comments

A FEW OTHER THINGS THAT ARE ALSO TRUE

Copying the work of other artists has historically been a way for an artist to learn technique, and it doesn’t necessarily mean that the student is about to pass their work off as the famous artist’s, or that they will keep copying an accomplished artist.

Also, as you can see from my unrefined experiments above, text-to-image AI requires more than simple prompts to get something worthwhile from it.

And by the way, you can search for your images online if you want to see whether anyone is using them without your permission. Just upload your image to Google’s reverse image search (Google Images or Google Lens), and Google will show you images that look similar to yours. It would be tedious to do this one by one for every image you have, but as I write this, I’m thinking you could use an AI model to do it iteratively for you.
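If you collect candidate images from a reverse image search, one way to check automatically whether a found image is a near-copy of yours (rather than merely similar) is a perceptual hash. This is not how Google’s search works; it is a swapped-in, deliberately simple technique called average hashing, sketched here in plain Python under the assumption that each image has already been loaded as a grid of grayscale values from 0 to 255 (loading and converting real files needs an image library such as Pillow). The function names are hypothetical.

```python
from typing import List, Sequence

Grid = Sequence[Sequence[float]]  # rows of grayscale pixel values, 0-255


def average_hash(pixels: Grid, hash_size: int = 8) -> int:
    """Average hash: shrink to hash_size x hash_size by block-averaging,
    then set one bit per cell (1 if the cell is brighter than the mean)."""
    height, width = len(pixels), len(pixels[0])
    if height < hash_size or width < hash_size:
        raise ValueError("image is smaller than the hash grid")
    cells: List[float] = []
    for r in range(hash_size):
        r0, r1 = r * height // hash_size, (r + 1) * height // hash_size
        for c in range(hash_size):
            c0, c1 = c * width // hash_size, (c + 1) * width // hash_size
            block = [pixels[y][x] for y in range(r0, r1) for x in range(c0, c1)]
            cells.append(sum(block) / len(block))
    mean = sum(cells) / len(cells)
    bits = 0
    for value in cells:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Number of differing bits; a small distance suggests a near-copy."""
    return bin(a ^ b).count("1")


# Demo on synthetic images: a left-to-right gradient, a slightly brighter copy,
# and a half-size copy hash identically; an inverted copy differs in every bit.
gradient = [[x * 4 for x in range(64)] for _ in range(64)]
brighter = [[v + 2 for v in row] for row in gradient]
half_size = [[x * 8 for x in range(32)] for _ in range(32)]
inverted = [[255 - v for v in row] for row in gradient]
print(hamming_distance(average_hash(gradient), average_hash(brighter)))   # 0
print(hamming_distance(average_hash(gradient), average_hash(half_size)))  # 0
print(hamming_distance(average_hash(gradient), average_hash(inverted)))   # 64
```

Resizing or slightly brightening an image barely changes its average hash, so a small distance points to a copy of your image; how small counts as a match is a threshold you would tune on your own images. It won’t flag new AI-generated images made “in the style of” your work, only copies of the image itself. Ready-made implementations also exist, such as the `imagehash` Python package.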

And that, in my opinion, is the better way to approach AI: see what you can do with it for good purposes. Use it every day in some way. Not only will you get a better understanding of what AI can and can’t do, you’ll also be better informed about the risks of displaying your art online. And you may very well find it beneficial to you in ways that infringe no one’s rights!